Most application data is stored in relational databases. There are other ways of storing data, but my guess is that about 75% of applications use an RDBMS. When we work with these databases we often write .NET code that functions correctly yet reads, writes or handles data incorrectly at run time. A common case I have found is that developers concentrate so much on getting the application tier right that they ignore features built into the RDBMS and fall into pitfalls of data handling.

One such issue is data concurrency. It is one of those things developers don't look at when they hit a data handling issue, and sometimes they never consider that data handling could be the root cause. A related consequence is missing transaction support. Agree or not, I for one cannot think of a business application that does not need transactions. More often than not, a business process in an application translates into a transaction block or unit.

Concurrency can be controlled in one of two ways: optimistically or pessimistically. Whichever mode you choose, it is important to understand the concept and how to apply it.

Optimistic concurrency takes no locks when data is read. If another update happens after the data was displayed, the end user is looking at stale (dirty) data, and unless the application checks for this, the last update simply succeeds. It is the application's responsibility to detect stale data and then decide whether to go ahead with the update. Optimistic concurrency is used extensively in .NET to address the needs of mobile and disconnected applications, where locking data rows for prolonged periods of time would be infeasible. Also, maintaining record locks requires a persistent connection to the database server, which is not possible in disconnected applications.
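One common way for the application to detect stale data is a version column that every update both checks and increments. The sketch below is only an illustration of that idea. It uses Python's built-in `sqlite3` module rather than ADO.NET so that it runs standalone, and the `product` table, its `version` column and the `update_price` helper are all hypothetical names invented for the example.

```python
import sqlite3

def update_price(conn, product_id, new_price, version_read):
    """Apply the update only if the row still carries the version we read."""
    cur = conn.execute(
        "UPDATE product SET price = ?, version = version + 1 "
        "WHERE id = ? AND version = ?",
        (new_price, product_id, version_read),
    )
    conn.commit()
    # A rowcount of 0 means someone else changed the row after we read it.
    return cur.rowcount == 1

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE product (id INTEGER PRIMARY KEY, price REAL, version INTEGER)"
)
conn.execute("INSERT INTO product VALUES (1, 10.0, 1)")
conn.commit()

def read_version(product_id):
    row = conn.execute(
        "SELECT version FROM product WHERE id = ?", (product_id,)
    ).fetchone()
    return row[0]

# Two users display the same row; no lock is held while they look at it.
version_seen_by_a = read_version(1)
version_seen_by_b = read_version(1)

first_ok = update_price(conn, 1, 12.0, version_seen_by_a)   # row unchanged, accepted
second_ok = update_price(conn, 1, 15.0, version_seen_by_b)  # stale version, rejected

final_price = conn.execute("SELECT price FROM product WHERE id = 1").fetchone()[0]
print(first_ok, second_ok, final_price)
```

When the second update is rejected, the application decides what happens next: reload and show the fresh values, merge the changes, or overwrite deliberately. The same `WHERE ... AND version = ?` pattern should carry over to an ADO.NET command, where the affected-row count plays the role of `rowcount`.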

In the pessimistic scenario, the consumer of the data obtains locks when reading it, and any updates to the same data are prevented until those locks are released. Pessimistic concurrency requires a persistent connection to the database and is not a scalable option when users are interacting with data, because records might be locked for relatively long periods of time.
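To make the blocking behaviour concrete, here is a rough sketch, again in Python with `sqlite3` so it can run on its own. Note the assumptions: SQLite locks the whole database rather than individual rows, so this only approximates the row locks a server RDBMS would take, and the `account` table and the connection names are made up for the example.

```python
import os
import sqlite3
import tempfile

path = os.path.join(tempfile.mkdtemp(), "demo.db")

# isolation_level=None lets us issue BEGIN/COMMIT ourselves;
# timeout=0 makes a blocked writer fail at once instead of waiting.
holder = sqlite3.connect(path, timeout=0, isolation_level=None)
other = sqlite3.connect(path, timeout=0, isolation_level=None)

holder.execute("CREATE TABLE account (id INTEGER PRIMARY KEY, balance INTEGER)")
holder.execute("INSERT INTO account VALUES (1, 100)")

# The first user takes the write lock up front and keeps the connection open.
holder.execute("BEGIN IMMEDIATE")
holder.execute("UPDATE account SET balance = balance - 10 WHERE id = 1")

# While that lock is held, a second writer is refused.
try:
    other.execute("BEGIN IMMEDIATE")
    blocked = False
except sqlite3.OperationalError:
    blocked = True

holder.execute("COMMIT")  # releasing the lock lets the other user proceed

other.execute("BEGIN IMMEDIATE")
other.execute("UPDATE account SET balance = balance - 5 WHERE id = 1")
other.execute("COMMIT")

balance = other.execute("SELECT balance FROM account WHERE id = 1").fetchone()[0]
print(blocked, balance)

holder.close()
other.close()
```

The second user can do nothing until the first one commits. If the first user is a person staring at a form, that wait can be arbitrarily long, which is the scalability problem described above.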

These are the two mechanisms you can adopt. To implement either of them in the data tier of a .NET application, developers use transactions.

Most of today’s applications need to support transactions for maintaining the integrity of a system’s data. There are several approaches to transaction management; however, each approach fits into one of two basic programming models:

Manual transactions. You write code that uses the transaction support features of either ADO.NET or Transact-SQL directly in your component code or stored procedures, respectively.

Automatic transactions. Using Enterprise Services (COM+), you add declarative attributes to your .NET classes to specify the transactional requirements of your objects at run time. You can use this model to easily configure multiple components to perform work within the same transaction.
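The difference between the two models can be sketched in a few lines. This is a loose analogy only, written in Python with `sqlite3` so that it runs standalone. It is not ADO.NET or Enterprise Services code. The `transactional` decorator merely stands in for the idea of a declarative attribute, and the `account` table and both transfer functions are hypothetical.

```python
import functools
import sqlite3

def transfer_manual(conn, src, dst, amount):
    """Manual style: the code begins, commits and rolls back explicitly."""
    conn.execute("BEGIN")
    try:
        conn.execute(
            "UPDATE account SET balance = balance - ? WHERE id = ?", (amount, src)
        )
        conn.execute(
            "UPDATE account SET balance = balance + ? WHERE id = ?", (amount, dst)
        )
        conn.execute("COMMIT")
    except sqlite3.Error:
        conn.execute("ROLLBACK")
        raise

def transactional(func):
    """Declarative style: the wrapper owns the transaction boundary."""
    @functools.wraps(func)
    def wrapper(conn, *args, **kwargs):
        conn.execute("BEGIN")
        try:
            result = func(conn, *args, **kwargs)
            conn.execute("COMMIT")
            return result
        except Exception:
            conn.execute("ROLLBACK")
            raise
    return wrapper

@transactional
def transfer_auto(conn, src, dst, amount):
    # No BEGIN/COMMIT here: the function only states the work to be done.
    conn.execute(
        "UPDATE account SET balance = balance + ? WHERE id = ?", (amount, dst)
    )
    conn.execute(
        "UPDATE account SET balance = balance - ? WHERE id = ?", (amount, src)
    )

# isolation_level=None so that the explicit BEGIN/COMMIT statements are honoured.
conn = sqlite3.connect(":memory:", isolation_level=None)
conn.execute(
    "CREATE TABLE account ("
    "id INTEGER PRIMARY KEY, balance INTEGER CHECK (balance >= 0))"
)
conn.execute("INSERT INTO account VALUES (1, 100), (2, 0)")

transfer_manual(conn, 1, 2, 30)  # both updates commit together

try:
    transfer_auto(conn, 1, 2, 500)  # the debit violates the CHECK constraint
    failed = False
except sqlite3.IntegrityError:
    failed = True  # the credit that had already run was rolled back

balances = conn.execute("SELECT balance FROM account ORDER BY id").fetchall()
print(failed, balances)
```

In both styles the two updates succeed or fail as one unit. What changes is who writes the begin, commit and rollback calls: your own code in the manual model, the surrounding infrastructure in the automatic one.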

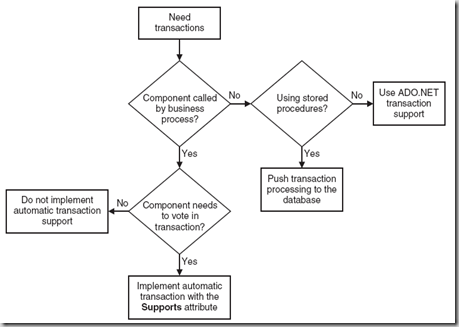

To decide whether to use transactions in SQL code, in ADO.NET, or as automatic transactions, I found a simple decision tree that helps you choose which method to use. Of course, you first have to decide whether you need transactions in your .NET application at all.